How to design a Live Video Streaming service like Disney+ Hotstar

Part 1: Designing for a single user.

I am a Journalist-turned-Software Engineer. I love coding and the associated grind of learning every day. A firm believer in social learning, I owe my dev career to all the tech content creators I have learned from. This is my contribution back to the community.

Note: We will cover a few fundamental concepts and then dive deep into designing a live streaming service.

Intro

As an avid cricket fan and a Disney+ Hotstar subscriber, I have not watched a single live cricket match without checking the live viewer counter, and during tense moments in the game my eyes are almost locked on it.

Even during those tense moments, as an engineer, I cannot stop marveling at the scale at which the Hotstar, AWS, and Akamai stack operates.

The engineers who work on these solutions must be so proud to see the world enjoying the fruits of their labors.

Working on problems at this scale is what most engineers (at least I) yearn for.

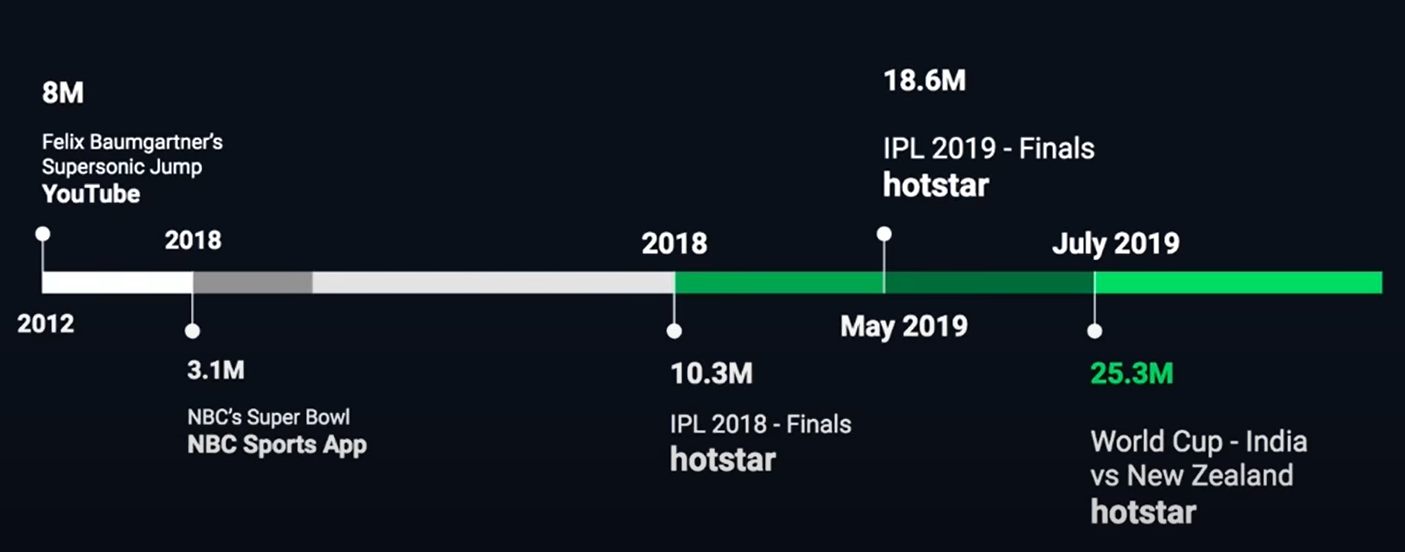

For readers wondering about the significance of this: Hotstar has consistently served 10M+ concurrent users without any noteworthy glitches. In fact, in 2019, Hotstar's systems (running on AWS and Akamai) served a record 2.53 crore (25.3 million) concurrent users.

And this record is not far from being broken by another cricketing live event on the same platform.

The second-best platform at this scale of concurrency was YouTube, in 2013.

source: youtube


So what does it take to build a Live Streaming Service like Hotstar? Let's find out.

Functional Requirements

- Stream live sports (or any event) video to a global audience on any device, at any bandwidth, with low latency

- Users should be able to like and comment in real-time

- Store the entire video for later replay

Non-functional Requirements

- There should be no buffering, i.e., streaming without any lag

- Reliable system

- High Availability

- Scalability

Challenges

- Latency - keeping the delay as little as possible

- Scale - millions of users concurrently watching

- Multiple devices - different resolutions and bandwidths
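To get a feel for the scale challenge, a rough back-of-envelope estimate helps. The sketch below multiplies the record concurrency quoted earlier (2.53 crore, i.e. 25.3 million viewers) by an average bitrate per viewer. The 3 Mbps average is an assumption chosen for illustration, not a published Hotstar figure, and the function name is hypothetical.

```python
# Record concurrency quoted in the intro: 2.53 crore = 25.3 million viewers.
RECORD_VIEWERS = 25_300_000

# ASSUMPTION: an illustrative average bitrate per viewer, in Mbps.
ASSUMED_AVG_BITRATE_MBPS = 3.0


def aggregate_egress_tbps(concurrent_viewers: int, avg_bitrate_mbps: float) -> float:
    """Total outbound bandwidth in Tbps if every viewer pulls avg_bitrate_mbps."""
    total_mbps = concurrent_viewers * avg_bitrate_mbps
    return total_mbps / 1_000_000  # 1 Tbps = 1,000,000 Mbps


egress = aggregate_egress_tbps(RECORD_VIEWERS, ASSUMED_AVG_BITRATE_MBPS)
print(f"Estimated aggregate egress: {egress:.1f} Tbps")
```

Under these assumptions the total comes to roughly 76 Tbps, which is why no single server (or single data center) can serve such an audience on its own.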

Live Streaming Service for a single user

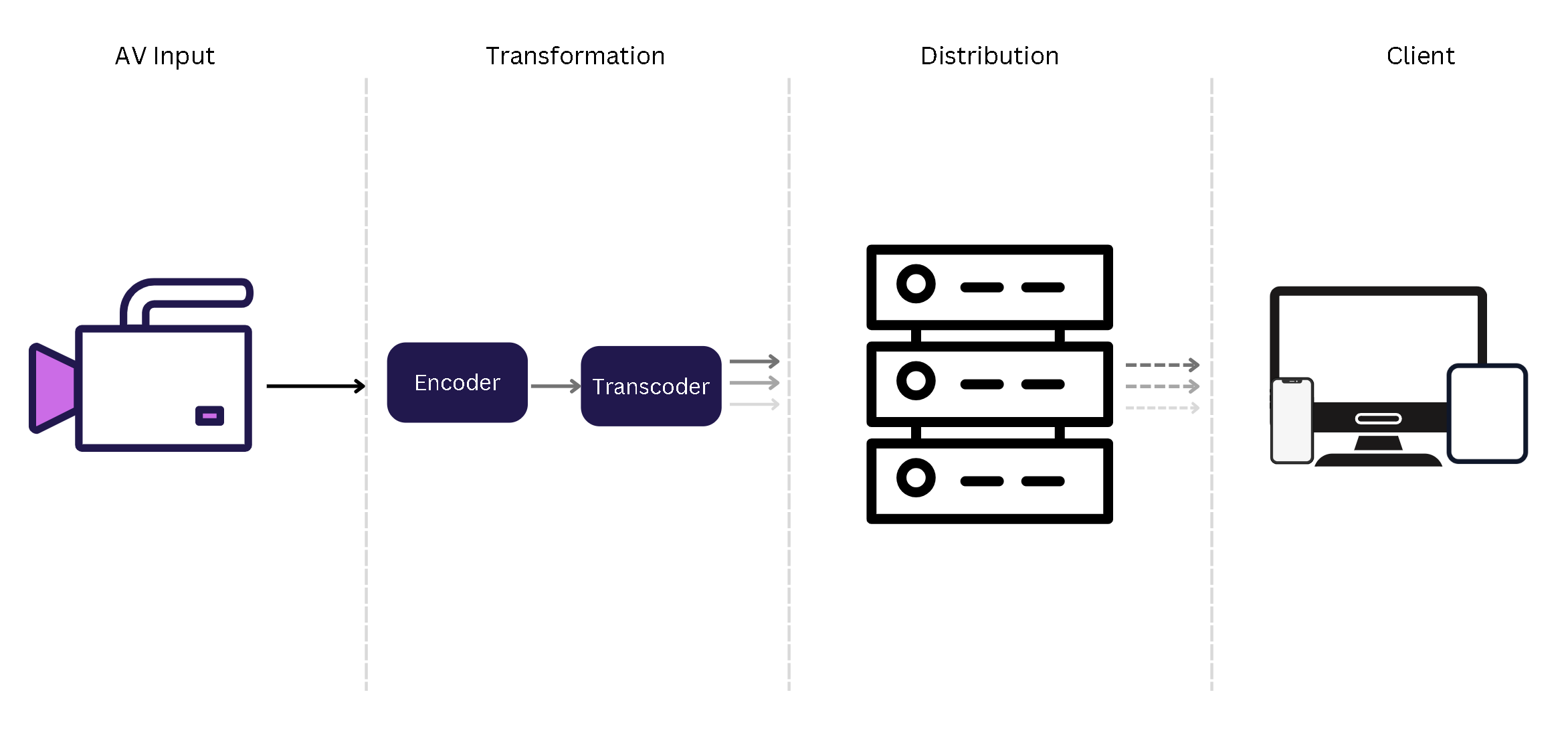

Streaming involves four stages:

- Publishing - capturing the live feed

- Transforming - converting into multiple formats

- Distributing - transporting the video to the client

- Viewing - watching the feed in the player on the client

Publishing

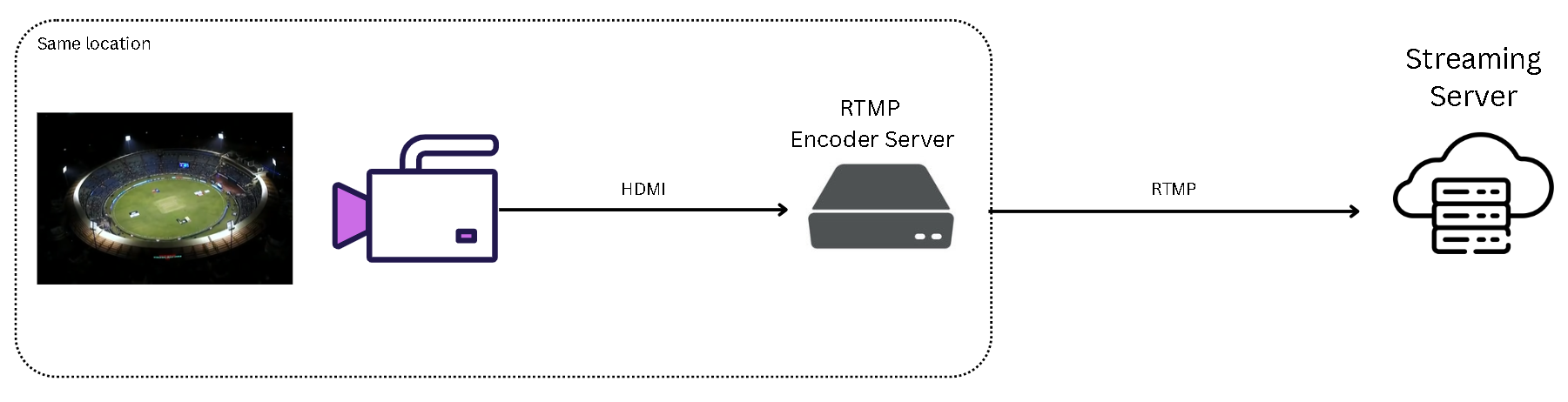

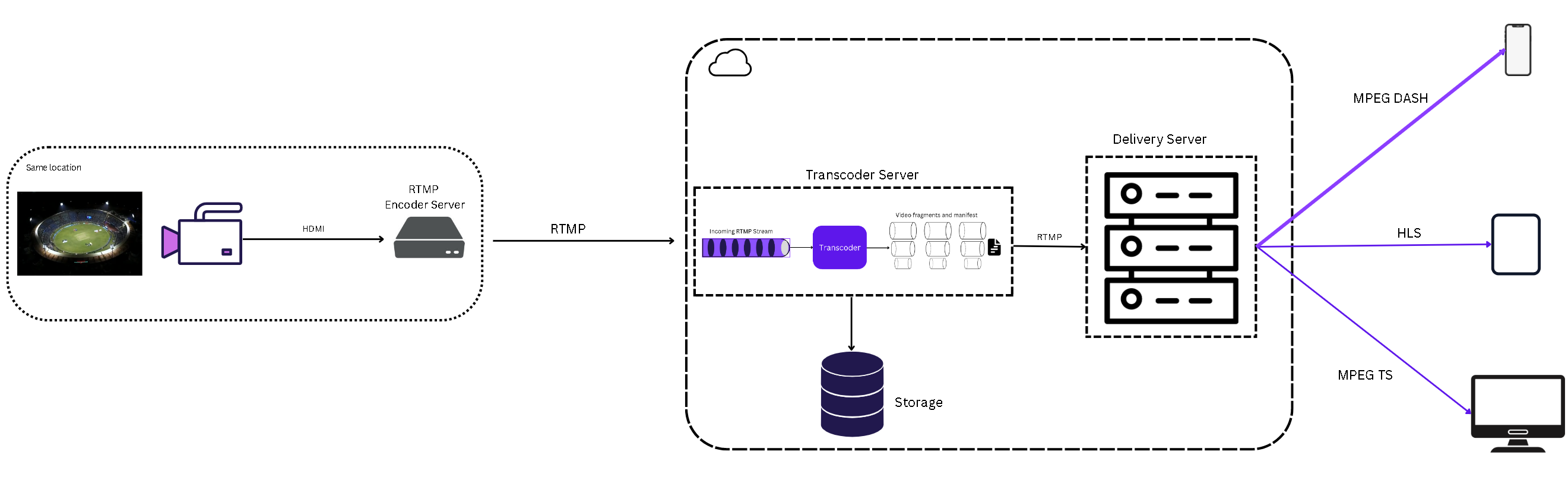

The first step in the publishing process is to acquire the camera feed. In the case of sporting events, the camera feed is usually backhauled to a central production facility via a satellite link or a dedicated high-speed Internet line.

A popular protocol for delivering the video feed to a streaming server is RTMP.

Real-Time Messaging Protocol (RTMP) is a TCP-based protocol designed to maintain persistent, low-latency connections while transmitting content from the source (encoder) to a hosting server.

The camera feed is pushed to an RTMP encoder server through HDMI. The encoder server converts the incoming video to the desired bitrate and codec, usually H.264 for video and AAC for audio.

The encoder then sends the encoded video to the cloud server using RTMP.
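As a rough illustration of the encode-and-push step, the sketch below builds an `ffmpeg` command line that encodes an input to H.264 video and AAC audio and publishes it over RTMP. Using ffmpeg as the encoder is an assumption (this article does not name a specific tool), and the source file, ingest URL, stream key, and bitrates are hypothetical placeholders.

```python
import shlex
from typing import List


def build_rtmp_publish_cmd(source: str, ingest_url: str,
                           video_kbps: int = 6000,
                           audio_kbps: int = 128) -> List[str]:
    """Build an ffmpeg argument list that encodes to H.264/AAC and pushes via RTMP."""
    return [
        "ffmpeg",
        "-re",                     # read input at its native frame rate
        "-i", source,              # camera capture or file (placeholder)
        "-c:v", "libx264",         # H.264 video encoder
        "-b:v", f"{video_kbps}k",  # target video bitrate
        "-c:a", "aac",             # AAC audio encoder
        "-b:a", f"{audio_kbps}k",  # target audio bitrate
        "-f", "flv",               # RTMP carries FLV-packaged streams
        ingest_url,                # hypothetical ingest endpoint
    ]


cmd = build_rtmp_publish_cmd(
    "match_feed.mp4",
    "rtmp://ingest.example.com/live/STREAM_KEY",
)
print(shlex.join(cmd))
```

The command is only printed here; passing `cmd` to `subprocess.run` would start the actual push, provided ffmpeg is installed and the ingest endpoint exists.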

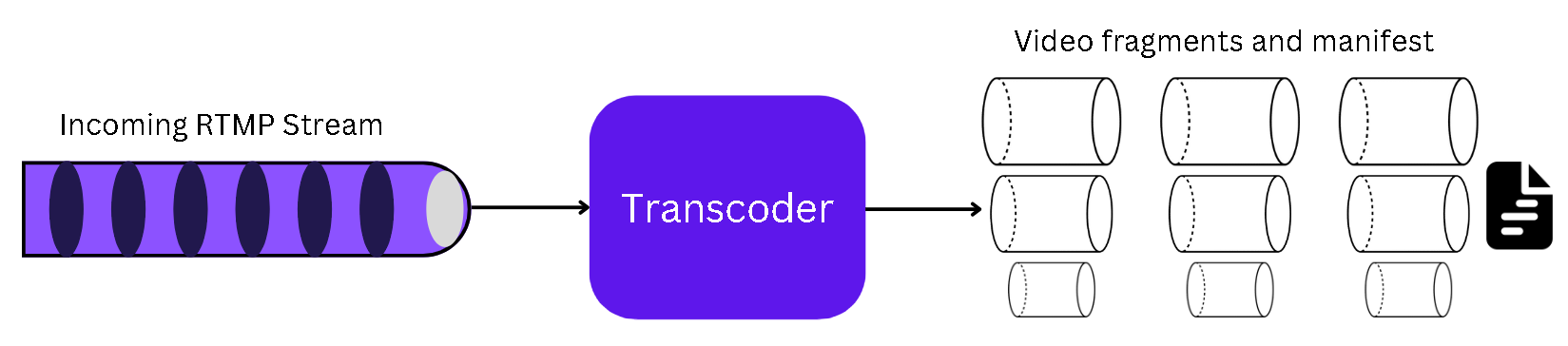

Transforming

One of the challenges in live streaming is streaming to different types of devices like mobiles, desktops, smart TVs, etc. For this, the ingested video, which is usually 8K/4K, is converted into different formats. This process of video conversion is called transcoding.

Essentially, the incoming RTMP stream is transcoded into multiple bitrates and split into short chunks for adaptive bitrate streaming.

The transcoder takes an 8K/4K video input stream and converts it into lower-bitrate streams, such as HD at 6 Mbps, 3 Mbps, 1 Mbps, 600 kbps, etc.
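One way to picture the output of this step is the manifest that lists every rendition for the player. The sketch below generates an HLS-style master playlist from the bitrates listed above. HLS itself is an assumption (the article does not say which streaming format is used), and the per-rendition paths such as `6000k/index.m3u8` are hypothetical.

```python
from typing import List

# Bitrate ladder from the text, in kbps: 6 Mbps, 3 Mbps, 1 Mbps, 600 kbps.
LADDER_KBPS = [6000, 3000, 1000, 600]


def master_playlist(ladder_kbps: List[int]) -> str:
    """Return an HLS-style master playlist with one variant per bitrate."""
    lines = ["#EXTM3U"]
    for kbps in sorted(ladder_kbps, reverse=True):
        # BANDWIDTH is expressed in bits per second.
        lines.append(f"#EXT-X-STREAM-INF:BANDWIDTH={kbps * 1000}")
        # Hypothetical location of this rendition's own chunk playlist.
        lines.append(f"{kbps}k/index.m3u8")
    return "\n".join(lines) + "\n"


playlist = master_playlist(LADDER_KBPS)
print(playlist)
```

Each variant entry points to a separate playlist of short video chunks, so a player can switch between bitrates at chunk boundaries.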

The streaming server also pushes all the versions of the video to Amazon S3-like storage for later playback.

Distributing

The transcoder sends the chunked videos to a Delivery/Media server.

The web player on the client makes a connection with the media server and requests the live video. The media server, which in this case is more like an edge server, responds with the stream of the relevant video format the player requested.
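How the player decides which format to request can be sketched as a simple throughput-based rule: pick the highest bitrate that fits within the measured bandwidth, with some headroom. This is a minimal sketch, not how any particular player works; the function name and the 0.8 safety factor are assumptions for illustration.

```python
from typing import List


def pick_rendition(available_kbps: List[int], measured_kbps: float,
                   safety: float = 0.8) -> int:
    """Pick the highest bitrate that fits within a fraction of measured bandwidth.

    Falls back to the lowest rendition when nothing fits, so playback
    can continue at reduced quality instead of stalling.
    """
    budget = measured_kbps * safety  # leave headroom for throughput dips
    fitting = [b for b in available_kbps if b <= budget]
    return max(fitting) if fitting else min(available_kbps)


ladder = [6000, 3000, 1000, 600]  # kbps, the bitrates listed in the text
for bandwidth in (10_000, 4_500, 500):
    print(bandwidth, "kbps measured ->", pick_rendition(ladder, bandwidth), "kbps stream")
```

Re-running this choice as each chunk is downloaded is what lets the stream adapt when a viewer's connection improves or degrades mid-match.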

Designing for more than 2 crore concurrent users
