How to Build an AI Agent Without Using Any Libraries: A Step-by-Step Guide

I am a Journalist-turned-Software Engineer. I love coding and the associated grind of learning every day. A firm believer in social learning, I owe my dev career to all the tech content creators I have learned from. This is my contribution back to the community.

The Context

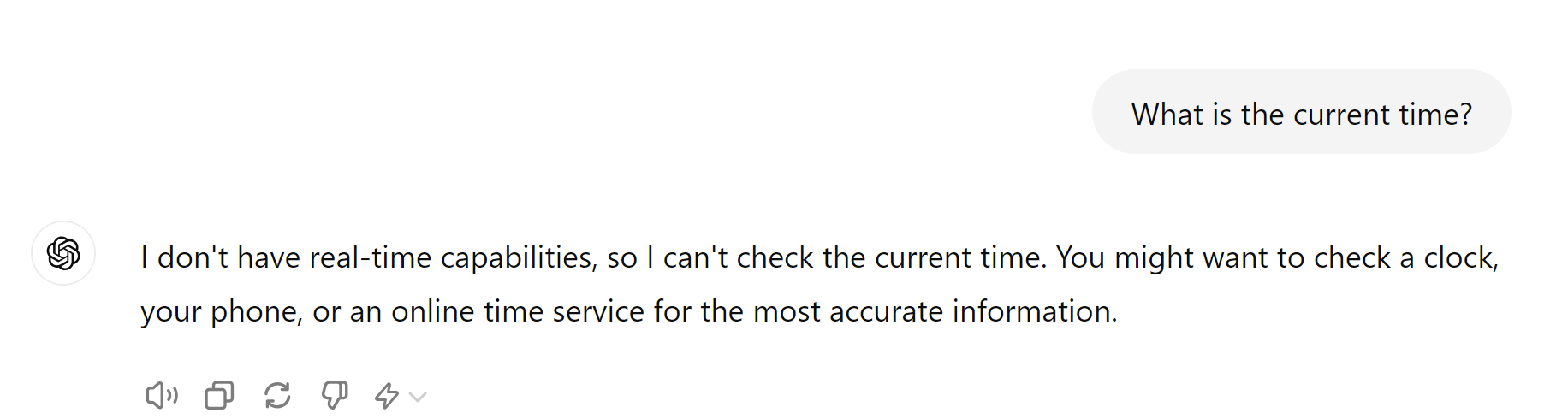

Try asking ChatGPT or Claude these questions and you will get a response like the one shown here.

We know LLMs cannot access real-time information on their own, so let's use this as the problem statement for our basic AI agent.

Before we begin, let's understand the fundamental building blocks of an agent.

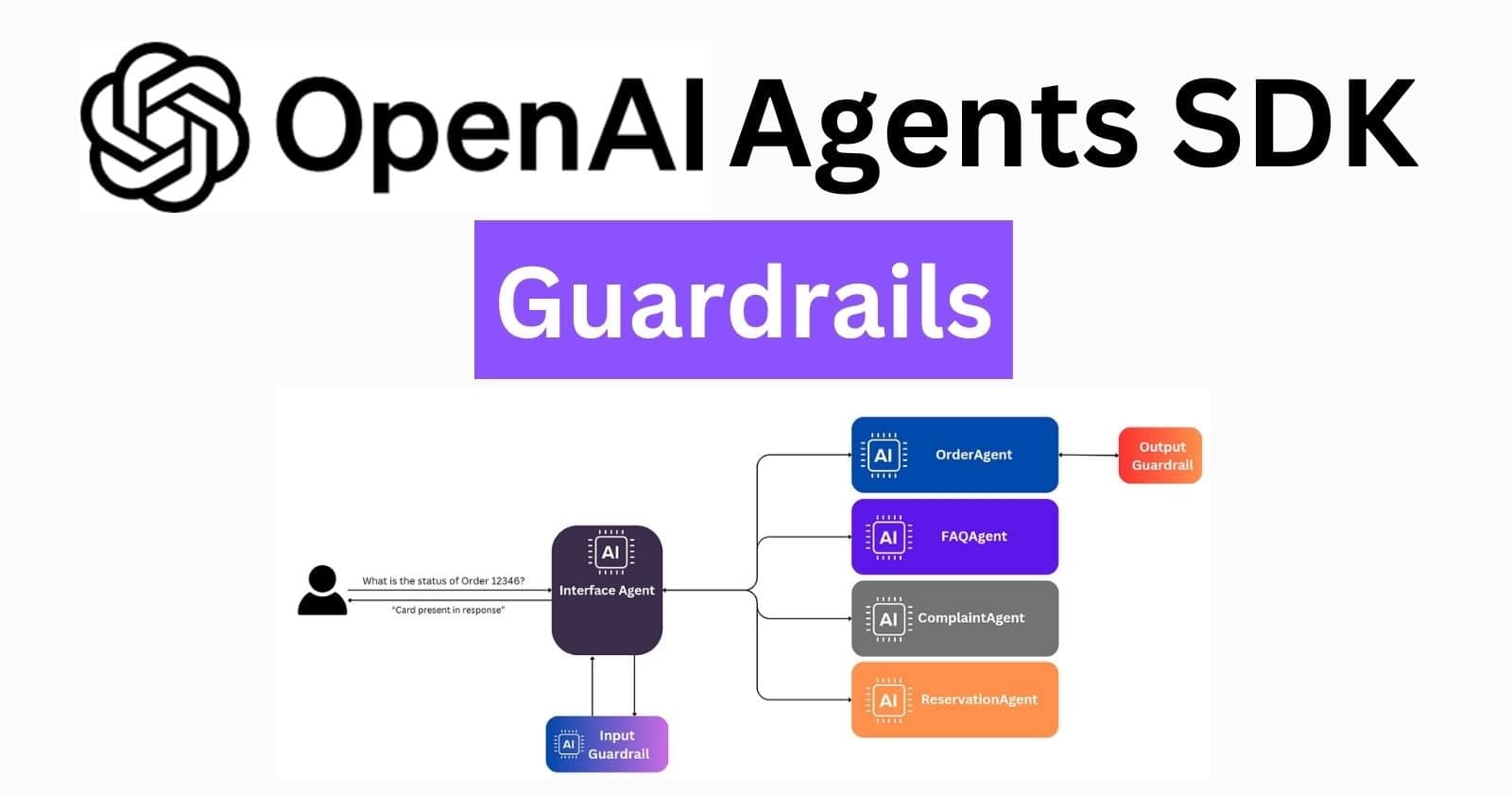

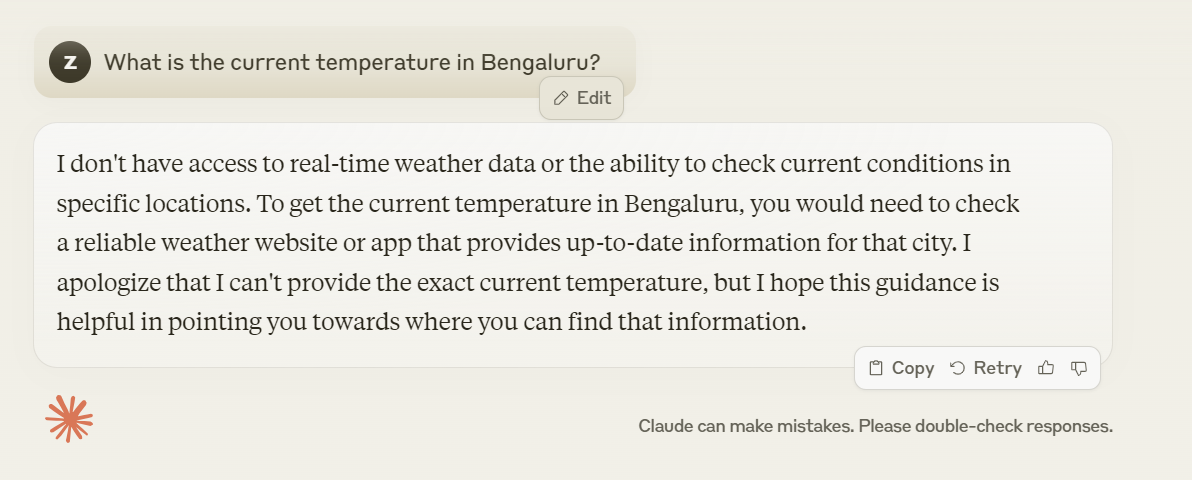

An agent essentially comprises three primary components: the Model, the Tools, and the Reasoning Loop.

The model refers to the Large Language Model (LLM) at the core of the AI agent. This is the foundation of the agent's intelligence and capabilities. The LLM is trained on vast amounts of text data, allowing it to understand and generate human-like text. It provides the agent with knowledge, language understanding, and the ability to process complex instructions.

Tools are specific functions or capabilities that the AI agent can use to interact with its environment or perform tasks.

The reasoning loop is the iterative process that allows the agent to make decisions, solve problems, and accomplish tasks.
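To make the three components concrete, here is a minimal sketch of how they can fit together. It is not the agent built later in this tutorial: `fake_model`, `clock_tool`, and `reasoning_loop` are hypothetical names, and the model is a scripted stand-in so the example runs without an API key.

```python
def clock_tool(_args):
    """Tool: a stand-in that returns a fixed time string."""
    return "The current time is 10:00."


def fake_model(history):
    """Model: a scripted stand-in for an LLM (assumption, no real API call).

    It requests the clock tool first, then answers the user once the
    latest history entry is a tool result.
    """
    prefix = "Tool: "
    if history[-1].startswith(prefix):
        return {"action": "respond_to_user", "args": history[-1][len(prefix):]}
    return {"action": "clock", "args": ""}


def reasoning_loop(user_input, model, tools, max_steps=5):
    """Loop: ask the model, run the chosen tool, feed the result back."""
    history = [f"User: {user_input}"]
    for _ in range(max_steps):
        decision = model(history)
        if decision["action"] == "respond_to_user":
            return decision["args"]
        result = tools[decision["action"]](decision["args"])
        history.append(f"Tool: {result}")
    return "Stopped: step limit reached."


answer = reasoning_loop("What time is it?", fake_model, {"clock": clock_tool})
print(answer)
```

The `max_steps` limit is a design choice of this sketch: it keeps the loop from running forever if the model never decides to respond.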

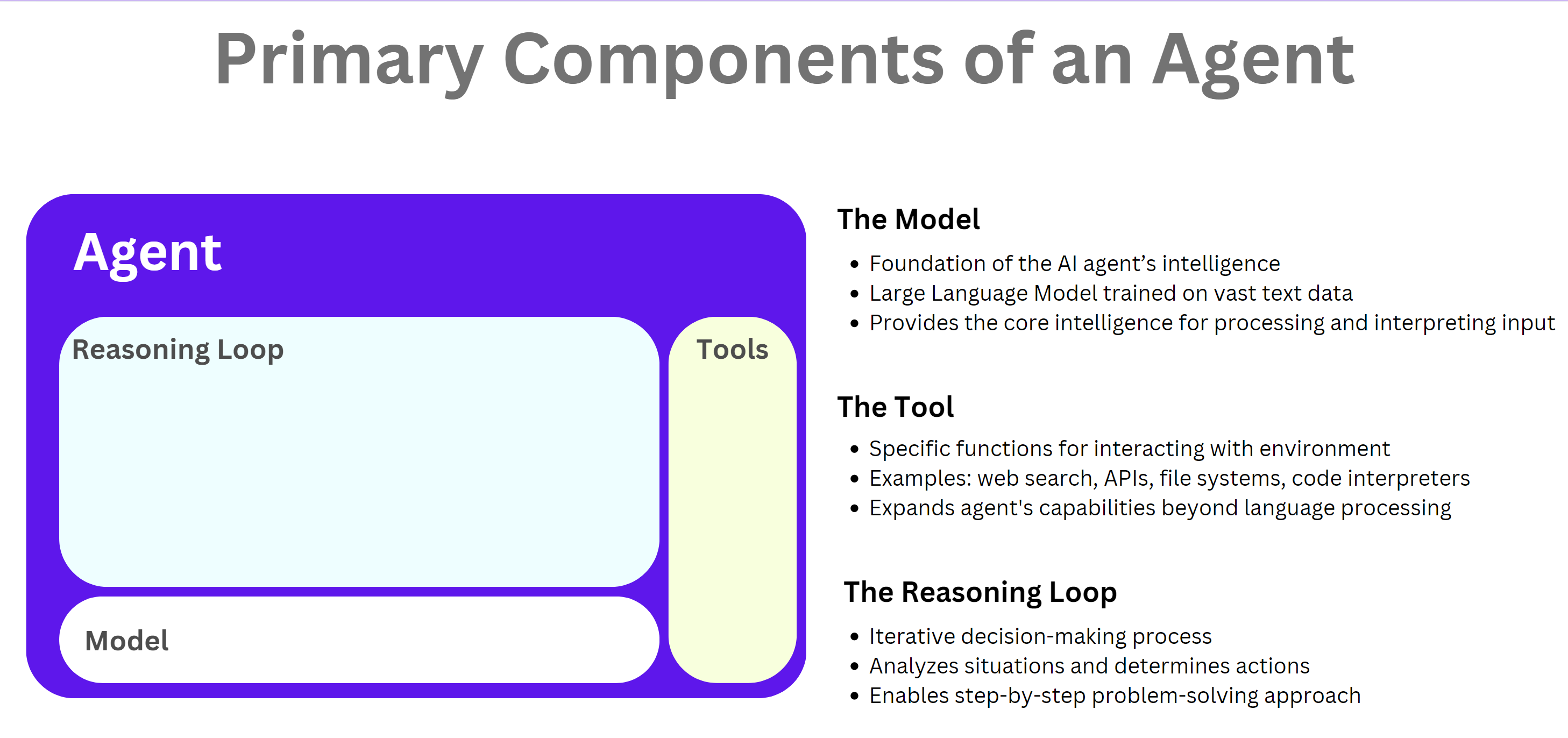

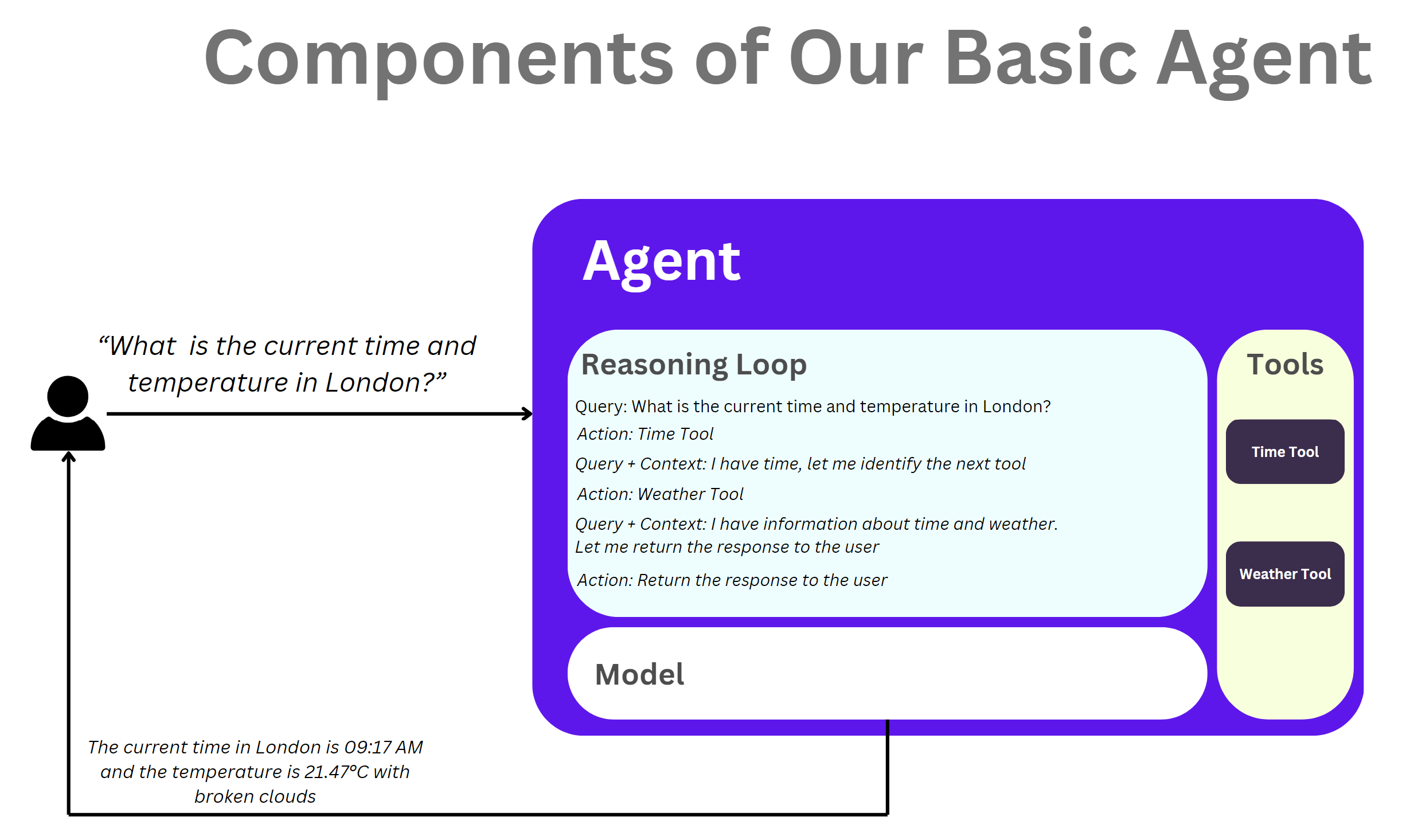

In the context of our use case, this is how the three components work together.

In this tutorial, we'll create a more advanced LLM agent that uses the concept of "tools" to perform various tasks. This approach allows for greater flexibility and easier expansion of the agent's capabilities.

Prerequisites

Basic Python programming knowledge

Understanding of LLMs and their capabilities

Python 3.9 or higher (the code imports zoneinfo, which was added in Python 3.9)

Implementing the Tool-based Agent

Let's start by creating the tool interface. Every tool we build must implement the methods it declares.

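import datetime
import requests
from zoneinfo import ZoneInfo
from abc import ABC, abstractmethod


class Tool(ABC):
    """Interface that every tool must implement."""

    @abstractmethod
    def name(self) -> str:
        """Return the name the LLM uses to select this tool."""
        pass

    @abstractmethod
    def description(self) -> str:
        """Return a short description of what the tool does."""
        pass

    @abstractmethod
    def use(self, *args, **kwargs):
        """Run the tool and return its result."""
        pass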
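As a quick illustration of what the abstract base class buys us, the sketch below repeats the interface and then tries to instantiate a subclass that skips two of the required methods. `IncompleteTool` is a hypothetical class used only for this demonstration.

```python
from abc import ABC, abstractmethod


class Tool(ABC):
    @abstractmethod
    def name(self) -> str:
        pass

    @abstractmethod
    def description(self) -> str:
        pass

    @abstractmethod
    def use(self, *args, **kwargs):
        pass


class IncompleteTool(Tool):
    """Hypothetical tool that forgets to implement description() and use()."""

    def name(self) -> str:
        return "Incomplete Tool"


# Python refuses to instantiate a class with unimplemented abstract methods.
try:
    IncompleteTool()
    error_message = None
except TypeError as exc:
    error_message = str(exc)

print(error_message)
```

The exact wording of the error varies between Python versions, but it names the class and the missing methods, so a forgotten method surfaces as soon as the tool is created rather than in the middle of a conversation.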

Now, let's implement some tools:

class TimeTool(Tool):
    def name(self):
        return "Time Tool"

    def description(self):
        return "Provides the current time for a given city's timezone like Asia/Kolkata, America/New_York etc. If no timezone is provided, it returns the local time."

    def use(self, *args, **kwargs):
        time_format = "%Y-%m-%d %H:%M:%S %Z%z"
        # Start from a timezone-aware local time so %Z and %z are populated.
        current_time = datetime.datetime.now().astimezone()
        # The agent passes the timezone as the first positional argument, if any.
        input_timezone = args[0] if args else None
        if input_timezone:
            print("TimeZone", input_timezone)
            current_time = current_time.astimezone(ZoneInfo(input_timezone))
        return f"The current time is {current_time.strftime(time_format)}."


class WeatherTool(Tool):
    def name(self):
        return "Weather Tool"

    def description(self):
        return "Provides weather information for a given location"

    def use(self, *args, **kwargs):
        # Accept either a bare location or a phrase such as "weather in Paris".
        location = args[0].split("weather in ")[-1]
        # userdata is Colab's secrets store; it is imported in the main script.
        api_key = userdata.get("OPENWEATHERMAP_API_KEY")
        url = f"http://api.openweathermap.org/data/2.5/weather?q={location}&appid={api_key}&units=metric"
        response = requests.get(url)
        data = response.json()
        if data["cod"] == 200:
            temp = data["main"]["temp"]
            description = data["weather"][0]["description"]
            return f"The weather in {location} is currently {description} with a temperature of {temp}°C."
        else:
            return f"Sorry, I couldn't find weather information for {location}."
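The response handling in the weather tool can be tried out without a network call or an API key. The sketch below assumes the payload shape that the tool's code reads (`cod`, `main.temp`, `weather[0].description`). `format_weather` and both sample payloads are hypothetical and hand-written, not real API output. The string `"404"` in the failure payload is an assumption about how the service reports an unknown city.

```python
def format_weather(location, data):
    """Turn a decoded weather payload into the sentence the tool returns."""
    # Compare as strings so both 200 (int) and "200" (str) count as success.
    if str(data.get("cod")) == "200":
        temp = data["main"]["temp"]
        description = data["weather"][0]["description"]
        return f"The weather in {location} is currently {description} with a temperature of {temp}°C."
    return f"Sorry, I couldn't find weather information for {location}."


# Hand-written sample payloads (assumptions, not captured API responses).
ok_payload = {"cod": 200, "main": {"temp": 21.5}, "weather": [{"description": "clear sky"}]}
missing_payload = {"cod": "404", "message": "city not found"}

print(format_weather("Paris", ok_payload))
print(format_weather("Atlantis", missing_payload))
```

Separating the formatting from the HTTP request like this also makes the tool easier to unit test.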

Now let's implement the all-important agent.

import requests
import json
import ast


class Agent:
    def __init__(self):
        self.tools = []
        self.memory = []
        self.max_memory = 10  # number of recent messages kept as context

    def add_tool(self, tool: Tool):
        self.tools.append(tool)

    def json_parser(self, input_string):
        # The prompt shows the response format as a Python dict literal,
        # so the reply is parsed with ast.literal_eval.
        python_dict = ast.literal_eval(input_string)
        json_string = json.dumps(python_dict)
        json_dict = json.loads(json_string)
        if isinstance(json_dict, dict):
            return json_dict
        # Raising a plain string is a TypeError in Python 3; raise an exception.
        raise ValueError("Invalid JSON response")

    def process_input(self, user_input):
        self.memory.append(f"User: {user_input}")
        self.memory = self.memory[-self.max_memory:]
        context = "\n".join(self.memory)
        tool_descriptions = "\n".join([f"- {tool.name()}: {tool.description()}" for tool in self.tools])
        response_format = {"action": "", "args": ""}
        prompt = f"""Context:
{context}

Available tools:
{tool_descriptions}

Based on the user's input and context, decide if you should use a tool or respond directly.
Sometimes you might have to use multiple tools to solve the user's input. You have to do that in a loop.
If you identify an action, respond with the tool name and the arguments for the tool.
If you decide to respond directly to the user then make the action "respond_to_user" with args as your response in the following format.

Response Format:
{response_format}
"""
        response = self.query_llm(prompt)
        self.memory.append(f"Agent: {response}")
        response_dict = self.json_parser(response)
        # Run the tool whose name matches the action chosen by the LLM.
        for tool in self.tools:
            if tool.name().lower() == response_dict["action"].lower():
                return tool.use(response_dict["args"])
        return response_dict

    def query_llm(self, prompt):
        # userdata is Colab's secrets store; it is imported in the main script.
        api_key = userdata.get("OPENAI_API_KEY")
        headers = {
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}"
        }
        data = {
            "model": "gpt-4o-mini-2024-07-18",
            "messages": [{"role": "user", "content": prompt}],
            "max_tokens": 150
        }
        response = requests.post("https://api.openai.com/v1/chat/completions", headers=headers, data=json.dumps(data))
        final_response = response.json()['choices'][0]['message']['content'].strip()
        print("LLM Response ", final_response)
        return final_response

    def run(self):
        print("LLM Agent: Hello! How can I assist you today?")
        user_input = input("You: ")
        while True:
            if user_input.lower() in ["exit", "bye", "close"]:
                print("See you later!")
                break
            response = self.process_input(user_input)
            if isinstance(response, dict) and response["action"] == "respond_to_user":
                print("Response from Agent: ", response["args"])
                user_input = input("You: ")
            else:
                # A tool result is fed back in as the next input to the loop.
                user_input = response
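One fragile spot in the agent is reply parsing. `ast.literal_eval` accepts a Python dict literal such as `{'action': '...'}`, but it raises on valid JSON that contains `true`, `false`, or `null`, and on replies wrapped in a Markdown code fence. The sketch below shows one possible way to tolerate those cases. `parse_llm_reply` is a hypothetical helper, not part of the original agent.

```python
import ast
import json

FENCE = "`" * 3  # a Markdown code fence marker


def parse_llm_reply(reply: str) -> dict:
    """Parse an LLM reply that should contain a single dict."""
    text = reply.strip()
    # Drop a surrounding Markdown code fence if the model added one.
    if text.startswith(FENCE):
        text = text.strip("`").strip()
        if text.lower().startswith("json"):
            text = text[4:].strip()
    try:
        parsed = json.loads(text)  # strict JSON first
    except json.JSONDecodeError:
        try:
            parsed = ast.literal_eval(text)  # fall back to a Python literal
        except (ValueError, SyntaxError) as exc:
            raise ValueError(f"Could not parse LLM reply: {reply!r}") from exc
    if not isinstance(parsed, dict):
        raise ValueError("LLM reply is not a dict")
    return parsed


print(parse_llm_reply("{'action': 'Time Tool', 'args': 'Asia/Kolkata'}"))
print(parse_llm_reply('{"action": "respond_to_user", "args": null}'))
```

Trying strict JSON first and falling back to a Python literal keeps the behaviour of the original parser for dict-style replies while also accepting standard JSON.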

Finally, let's create the main script to run our tool-based agent:

from google.colab import userdata


def main():
    agent = Agent()
    # Add tools to the agent
    agent.add_tool(TimeTool())
    agent.add_tool(WeatherTool())
    agent.run()


if __name__ == "__main__":
    main()

How It Works

The Agent class manages the overall operation of the agent.

Each tool is implemented as a separate class inheriting from the Tool abstract base class.

For each input, the agent asks the LLM to decide whether to use a tool or respond directly.

If the LLM names a tool, the agent runs it and feeds the result back into the loop as the next input; otherwise the reply is shown to the user.

The LLM is provided with context, including the conversation history and available tools.
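The context handling can be sketched in isolation. This example swaps the list slicing used in the Agent class for `collections.deque` with `maxlen`, which drops the oldest entries automatically. The sample conversation and the short tool descriptions are made up for illustration.

```python
from collections import deque

# The tutorial's agent keeps 10 entries; 3 keeps this demo short.
memory = deque(maxlen=3)
for turn in ["User: hi", "Agent: hello", "User: time in Tokyo?", "Agent: 09:00"]:
    memory.append(turn)  # the oldest entry is discarded once the limit is hit

# (name, description) pairs standing in for real tool objects.
tools = [
    ("Time Tool", "Provides the current time"),
    ("Weather Tool", "Provides weather information"),
]

context = "\n".join(memory)
tool_descriptions = "\n".join(f"- {name}: {desc}" for name, desc in tools)
prompt = f"Context:\n{context}\n\nAvailable tools:\n{tool_descriptions}\n"
print(prompt)
```

Only the three most recent turns reach the prompt, which bounds the prompt size no matter how long the conversation runs.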

Adding New Tools

To add a new tool to the agent, simply create a new class that inherits from Tool and implement the required methods. Then, add an instance of your new tool to the agent using the add_tool method.
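As an illustration, here is a sketch of a small extra tool. `WordCountTool` is a hypothetical example, and the Tool interface is repeated only so the snippet runs on its own.

```python
from abc import ABC, abstractmethod


class Tool(ABC):
    """Same interface as in the tutorial, repeated so this snippet runs alone."""

    @abstractmethod
    def name(self) -> str:
        pass

    @abstractmethod
    def description(self) -> str:
        pass

    @abstractmethod
    def use(self, *args, **kwargs):
        pass


class WordCountTool(Tool):
    """Hypothetical example tool: counts the words in the given text."""

    def name(self):
        return "Word Count Tool"

    def description(self):
        return "Counts the number of words in the text passed as the argument."

    def use(self, *args, **kwargs):
        text = args[0] if args else ""
        count = len(text.split())
        return f"The text contains {count} words."


tool = WordCountTool()
print(tool.use("build an agent from scratch"))
```

With the tutorial's Agent class in scope, registering it would be a single call: `agent.add_tool(WordCountTool())`. The description matters as much as the code, because it is the only thing the LLM sees when choosing a tool.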

Conclusion

You can further enhance this agent by:

Implementing more sophisticated tools

Adding error handling and input validation

Improving the LLM prompts for better tool selection

Implementing a more advanced memory system
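As a starting point for the error-handling item, one option is to wrap every tool call so that a failure becomes a message instead of crashing the loop. This is a minimal sketch under that assumption. `safe_use` and `flaky_tool` are hypothetical names.

```python
def safe_use(tool_fn, args):
    """Call a tool and convert any failure into a message the loop can handle."""
    try:
        return tool_fn(args)
    except Exception as exc:
        # Broad on purpose: a failing tool should not take the agent down.
        return f"Tool error: {type(exc).__name__}: {exc}"


def flaky_tool(args):
    """Hypothetical tool that rejects empty input."""
    if not args:
        raise ValueError("a location is required")
    return f"Looked up {args}."


print(safe_use(flaky_tool, "Paris"))
print(safe_use(flaky_tool, ""))
```

Because the tutorial's agent feeds tool results back to the LLM as the next input, returning the error as text gives the model a chance to retry with different arguments or explain the problem to the user.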